The New Data: Embracing Secure AI Frameworks in Cybersecurity

In the first section of our recently published eBook, Exponential Thinking For Technology Adoptions, we emphasized the need for businesses to adapt their data, technology systems, and organizational structures as they prepare for exponential GenAI integration. Let’s delve deeper into this concept, focusing on the unique challenges and opportunities that data presents in the realm of cybersecurity.

As AI becomes more integrated into various applications, the nature of the data it uses and generates is evolving, necessitating a fresh approach to cybersecurity.

Traditional data paradigms are being challenged, and the new kinds of data that AI employs, including model data and prompts, require innovative strategies to ensure security and privacy.

Understanding AI Model Data

GenAI model data encompasses the datasets used to train, validate, and test AI models. This data is crucial as it directly influences the performance and accuracy of AI systems. Unlike conventional datasets, AI model data often includes vast, diverse, and complex information, ranging from structured datasets like spreadsheets to unstructured data such as text, images, and videos. The volume and variety of this data present unique challenges for cybersecurity.

Protecting model data involves ensuring its integrity, confidentiality, and availability. Any compromise in this data can lead to biased, inaccurate, or malicious outputs from AI systems. For instance, adversarial attacks can introduce subtle manipulations into training data, causing AI models to behave unpredictably or make incorrect decisions. Therefore, robust security measures, including advanced encryption, secure data storage, and rigorous access controls, are essential to safeguard the data.

The Role of Prompts in AI Systems

Prompts are another critical component in the functioning of AI, particularly in natural language processing (NLP) models like OpenAI’s GPT-4. Prompts are the inputs given to AI systems to elicit desired responses. They can range from simple questions to complex instructions and significantly impact the quality and relevance of the outputs.

The security of prompts is vital as they can inadvertently reveal sensitive information or be exploited for malicious purposes. For example, malicious actors can craft prompts to extract confidential data or manipulate AI systems to generate harmful content. Ensuring the security of prompts involves not only protecting them from unauthorized access but also implementing filters and checks to prevent the use of harmful or deceptive inputs.

New Security Paradigms for AI Data

Given the unique characteristics of AI model data and prompts, traditional cybersecurity approaches may fall short. The dynamic and interactive nature of AI systems requires a more agile and proactive security framework. This is where concepts like the OODA Loop (Observe, Orient, Decide, Act) and the Vitality Score come into play. These approaches emphasize continuous monitoring, rapid adaptation, and proactive threat mitigation.

The OODA Loop encourages organizations to observe their AI systems continuously, orient themselves with the latest threat intelligence, swiftly decide on appropriate security measures, and act decisively to counteract threats. The Vitality Score provides a measure of an organization’s resilience and ability to adapt to changing threats, highlighting areas for improvement, and ensuring ongoing preparedness.

Embracing the Future of Secure AI

By rethinking cybersecurity through frameworks like the OODA Loop and the Vitality Score, organizations can enhance their resilience and agility in the face of AI-driven threats. Embracing these new paradigms will ensure that the benefits of AI can be harnessed safely and securely, paving the way for a future where AI-driven productivity and innovation can thrive without compromising security.

As AI continues to evolve, our approach to data security must keep up. By adopting innovative strategies and frameworks, businesses can protect their AI systems from emerging threats and ensure a secure and prosperous AI-driven future.

Conclusion: Understanding AI Model Data

GenAI model data is essential for AI performance and accuracy but presents unique cybersecurity challenges due to its complexity. Protecting this data from bias, inaccuracies, and malicious manipulation requires advanced security measures.

Prompts in AI systems like GPT-4 also need safeguarding to prevent sensitive information leaks or exploitation.

Traditional cybersecurity approaches may fall short. Innovative frameworks like the OODA Loop and the Vitality Score, emphasizing continuous monitoring and proactive threat mitigation, are crucial.

Key Takeaway: Adopting new security paradigms enhances resilience and agility, ensuring AI systems are safe, secure, and effective as technology evolves.

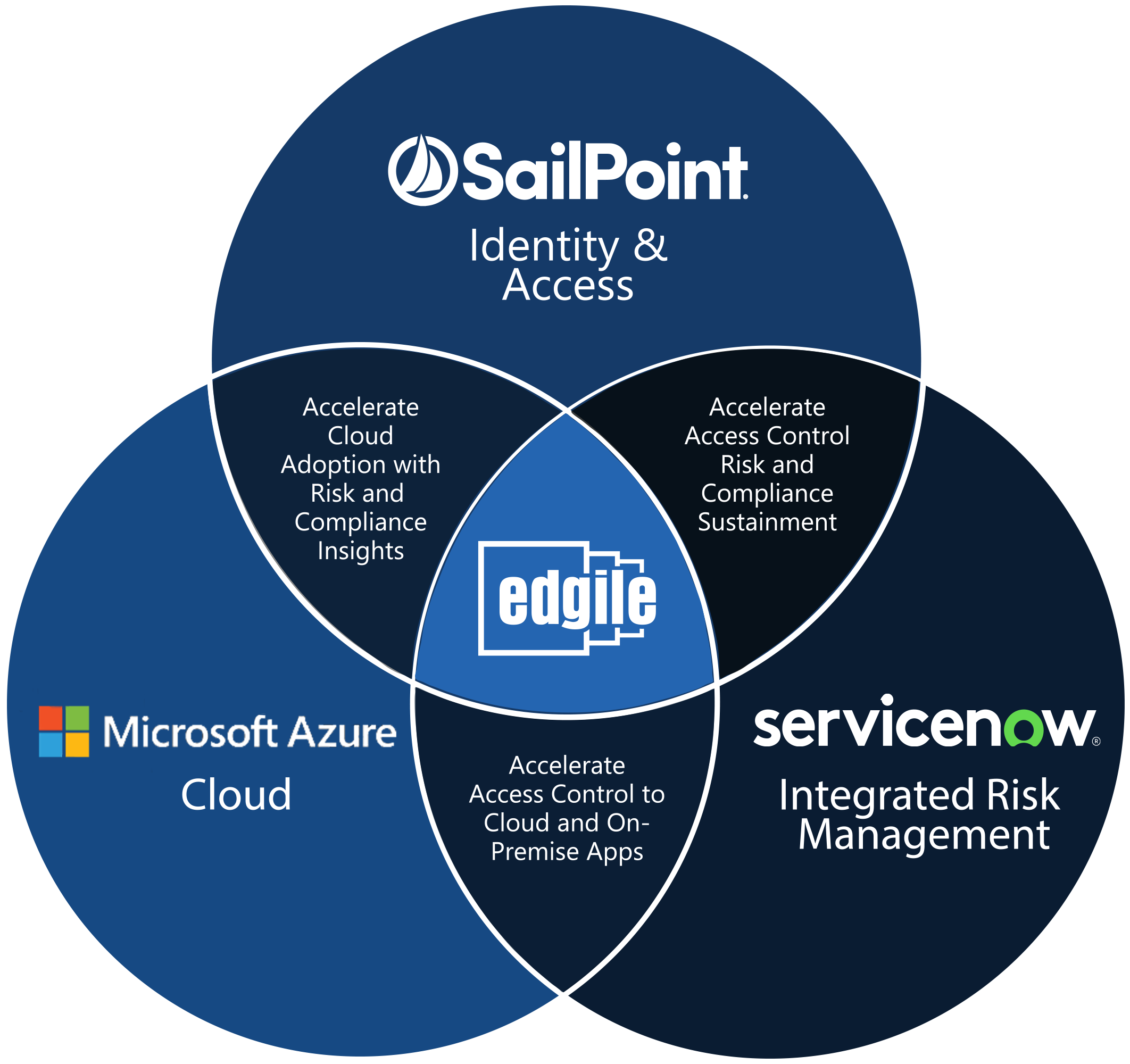

Connect with Edgile to get started

For details on how to optimize your information security programs, please contact your Edgile representative.